Whew, it’s been a crazy few weeks. Between spring registration, Thanksgiving, and some significant projects in other classes, I haven’t been able to make as much progress on my PComp final as I had hoped. I don’t have a whole lot to present this week. It’s a bit of a shame, because I feel like the input I’ve gotten from my peers so far has been fairly helpful. Fortunately, though, I’ll have a couple more chances to present my project between now and the final presentation. I’m combining my ICM and PComp finals, so my ICM class next week will provide another much-needed user testing phase.

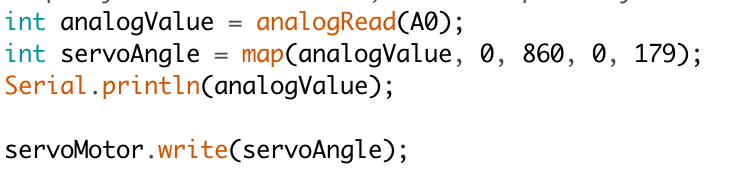

The most I can present at the moment is my daily schedule. Looking at it now, I’m nervous about getting it all done, but I still think it’s doable.

Daily Schedule

Wednesday 11-28 – Talk through concept with class, ask about triggers. Tubing, acrylic, and lumber need to be purchased.

Thursday 11-29 – Syphon the sketch into MadMapper and have it up and working. Plan out any additional animation needed.

Friday 11-30 – Finish CAD sketch. Program triggers in Processing and send the output through MadMapper to the secondary monitors. Finish Illustrator drawing for monitor mask.

Saturday 12-1 – Construction day. Timber needs to be cut and assembled. Acrylic needs to be cut on the laser cutter.

Sunday 12-2 – Off day to work on other projects.

Monday 12-3 – Assemble and test what I have for ICM the next day.

Tuesday 12-4 – Present for ICM. See if the concept is clear and ask what can be improved. Incorporate the advice into the project for PComp the next day.

Wednesday 12-5 – Present for PComp. Take in advice on the concept and incorporate it into the project. Redesign as needed.

Thursday 12-6 – Re-arrange triggers as needed, animate creature as needed.

Friday 12-7 – Incorporate new animations into design.

Saturday 12-8 – Program LED strips in Processing. Plug transistors into the board and get them working for lighting control.

Sunday 12-9 – Off day to work on other projects.

Monday 12-10 – Finalize project for ICM tomorrow.

Tuesday 12-11 – ICM final presentation. Take any input from ICM, finalize for PComp presentation.

Wednesday 12-12 – PComp final presentation.

Materials –

- Wood and particle board to build a stand for the computer/monitor

- Brackets and screws for stand

- Paint for stand?

- Tubes to represent the character’s feet

- LED strips to go into tubes

- Acrylic for monitor mask

- Projector – must be able to rent from ER